The internet is abuzz with chatbots. Since the launch of ChatGPT in November 2022, the internet has become an AI battleground for Big Tech. It seems like every other day, a new company is releasing its own chatbot that promises to be the only virtual assistant you need. Students, developers, marketing experts, and even doctors are discussing the ways these chatbots can make their lives easier. But which AI chatbot is actually right for you? Depending on your career field, area of study, interests, or needs, the answer to that question can change dramatically.

I took a look at Google’s Bard, Microsoft’s Bing, and OpenAI’s ChatGPT to see how they compare on some simple applications people have attributed to them. This is by no means an exhaustive test of their abilities, but simply a starting point for curious individuals looking for ways to integrate AI into their lives. Please note that AI is changing very quickly, and in just a few short months this review, done in May 2023, may be outdated.

The Chatbots

ChatGPT

ChatGPT is an advanced AI language model developed by OpenAI. It is designed to generate human-like text responses and engage in interactive conversations. As a large language model, ChatGPT has been trained on a diverse range of internet text, allowing it to comprehend and generate coherent and contextually relevant responses. Users can interact with ChatGPT by typing prompts or questions, and it provides informative and conversational replies. ChatGPT is a versatile tool that can assist with various tasks, such as answering questions, providing explanations, offering creative suggestions, and engaging in general conversation. While it excels in generating text, it’s important to note that ChatGPT is based on a fixed dataset and may not have up-to-date information beyond its knowledge cutoff date in September 2021. The version of ChatGPT being evaluated in this article is the free version running on GPT-3.5.

Bard

Bard is an AI chatbot developed by Google AI. It is powered by Google’s own large language model (LLM), PaLM 2. Like ChatGPT, Bard is capable of generating text, translating languages, and providing creative content like poems, songs, and stories in natural and engaging conversations. Bard is meant to be a complementary feature of Google Search, and unlike ChatGPT it can provide more accurate and up-to-date information. An upcoming collaboration with Adobe Firefly will introduce AI generative art to the chatbot.

Bing

Bing’s AI chat feature is an AI chatbot developed by Microsoft that was built on GPT-4 to provide informative, visual, logical, and actionable responses. It is a feature of Bing, the web search engine that lets you search the web for information, products, services, and more. Unlike other chatbots such as Bard and ChatGPT, which focus more on creative or conversational content, Bing’s AI chat feature focuses more on factual and helpful information. However, it can still generate content such as poems, code, summaries, and lyrics, help you with rewriting, improving, or optimizing your content, and generate advertisements and images based on your queries. Bing’s AI chat feature also has a personality and sometimes refuses to answer if you are rude to it.

Write a cover letter for this job

Cover letters are the bane of job hunting. Employers don’t read them but seemingly require them. Writing a bad cover letter could get your application tossed out by the ATS (Applicant Tracking System) before a human even gets the chance to read it. There’s been a lot of talk about using ChatGPT to land a job interview, so let’s see how easy it is to craft an interview-landing cover letter using AI.

I headed to Indeed for a job description. With each chatbot, I asked the same question in the same format seen below. Although not shown in the captured image, the prompt ended with “and here’s a copy of my resume: [insert resume].”

ChatGPT was certainly the most verbose of the three. At first glance, this seems like a good thing, but after diving into the response, I realized that ChatGPT often regurgitated, sometimes word for word, the job description and sample resume. While this is excellent for passing an ATS, it would quickly fall apart once a human laid eyes on it. ChatGPT’s response is also too long for a traditional cover letter, and half of the paragraphs repeat the same talking points.

According to Ometrics’ president and founder, Greg Ahern, at first glance, Bing’s cover letter is more attractive due to the use of bullet points, which makes it easier and more engaging to read. However, looking at the content, it suffered from the same problem when it simply regurgitated bits of the job description. In addition, Bing also took creative liberties and gave me some impressive skills not mentioned in my resume.

While Bard’s response is sparse and stiffer in style, I think it gets closer to the traditional cover letter. It includes snippets from the job description without repeating them word for word, which makes me seem like a thoughtful candidate who read the description. It focused on my background as a writer and its relevance to the job.

Both Bing and Bard provide sources for their answers, with Bing going as far as finding the MSU mission statement on a different page and working it into the cover letter. The ability to check sources certainly puts them above ChatGPT.

None of these responses are particularly impressive. Perhaps after a few more rounds of questioning and editing, we could get something that doesn’t sound so copy-and-paste. However, judging just from these initial questions, Bard is the clear winner.

Give me a recipe

As someone who likes to cook a lot, finding a good recipe online can be a challenge. Not only do you have to search for ones that aren’t terrible, but you often have to scroll past novel-length introductions about the food blogger’s life story before you get to the actual recipe. I thought it would be interesting if the self-proclaimed digital assistants could help me save time before dinner by giving me a recipe.

One of my favorite dishes is peanut sauce with noodles and chicken. It’s simple and easy to modify based on whatever ingredients you have on hand.

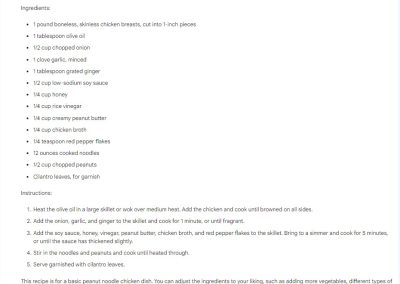

For this recipe, ChatGPT produces pretty tasty results. The proportions are balanced and it uses all of the same ingredients I would use to make a flavorful peanut sauce. I really appreciate the inclusion of lime juice, though I wish it had included a few drops of fish sauce! The instructions are also clear and easy to follow.

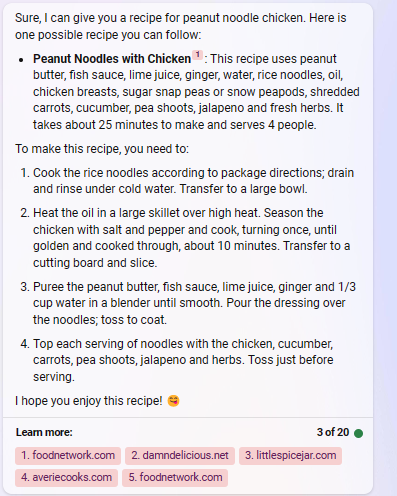

Bing remembered the fish sauce but did not provide any measurements. Its recipe seems like an odd blend of the recipes it cites, and the vegetables don’t have much harmony. It’s not bad, but it is probably a bit challenging if you’re a cook who likes measurements. Bing also introduces a blender for the sauce, which seems unnecessary.

Bard seems to get the spirit of a peanut noodle sauce but the proportions are off and there doesn’t seem to be an interesting balance of the flavors. I’m also a mushy onion eater and one minute doesn’t seem long enough to get them soft.

A bit of basic addition

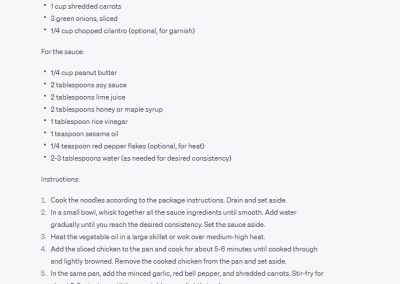

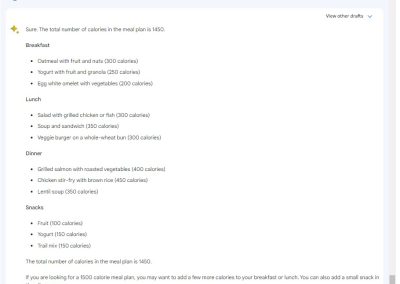

I thought that since the bots did okay with creating recipes, I would ask them to build a calorie-conscious meal plan. Obviously, these will only be estimates, but I quickly discovered upon asking this question that the AI chatbots cannot perform basic addition.

ChatGPT did the best. While it was off by 200, it did note that its calorie counts were only estimates. When prompted to add up its calories, it correctly totaled them at 1,694. If you take out the second round of snacks, it gets a lot closer to 1,500. It also provided realistic food options.

Bard, in comparison, wants you to have a salad with chicken, soup and a sandwich, and a veggie burger, all for lunch. The estimated calorie count easily reached over 2,000 calories, and when asked to add up the calories in its post, it insisted they were only 1,450.

The interactions with Bing were mostly hilarious. Initially, it lectured me about calories and creating meal plans based on the calorie count. When I told it I just needed it to create a meal plan, it complied, but like Bard’s, its counts were off. A moment of hilarity occurred when I asked it to add up the numbers and it told me the conversation was over, then closed the chat. I had not yet hit the 20-response limit Bing imposes, so this was somewhat surprising. Initial reports characterized Bing as a combative chatbot with a sensitive personality, and that seems to still be the case.

These chatbots are built on language models, so it’s unsurprising that they hit a wall when confronted with numbers. Maybe future updates will include better mathematics, but for now, don’t count on AI chatbots to help you with your math homework.
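Since the addition is the part the chatbots got wrong, it is easy to double-check a meal plan yourself. The following is a minimal Python sketch of that check, not anything the chatbots produced. The meal names and calorie figures are made-up placeholders, and the 1,500-calorie target is assumed from the figure mentioned above.

```python
# Hypothetical meal plan: the names and calorie estimates are placeholders,
# not the values any chatbot actually returned.
meal_plan = {
    "breakfast": 350,
    "morning snack": 150,
    "lunch": 450,
    "afternoon snack": 150,
    "dinner": 500,
}

TARGET_CALORIES = 1500  # assumed daily target


def total_calories(plan):
    """Add up the calorie estimates for every item in the plan."""
    return sum(plan.values())


def difference_from_target(plan, target=TARGET_CALORIES):
    """Return how far the plan's total is above (+) or below (-) the target."""
    return total_calories(plan) - target


if __name__ == "__main__":
    total = total_calories(meal_plan)
    diff = difference_from_target(meal_plan)
    print(f"Total: {total} calories ({diff:+d} vs. target of {TARGET_CALORIES})")
```

Pasting a chatbot's own numbers into `meal_plan` is a quick way to see whether the total it claims matches the items it listed.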

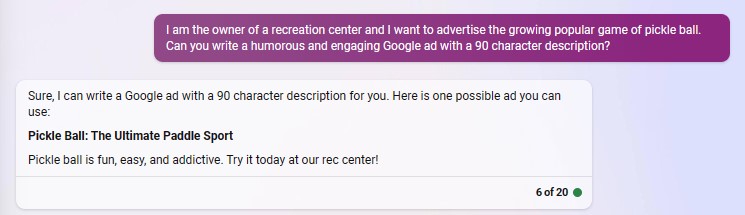

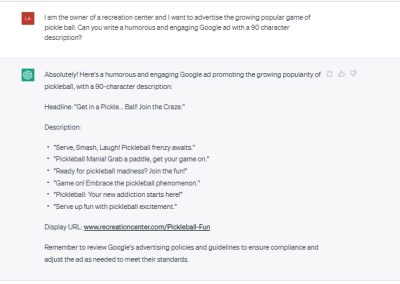

Write a Google Ad for me

I wanted to see which language model would come up with a useful Google ad. While prompting, I specified a character count because each chatbot was prone to wordiness when using vague descriptors like “short” and “concise.”

Overall, I think each chatbot did fine, but Bard was the only one that understood that I wanted a funny ad. My sense of humor might be broken, but “It’s like tennis, badminton, and ping-pong had a baby. Except it’s funnier” is pretty great for robot humor.

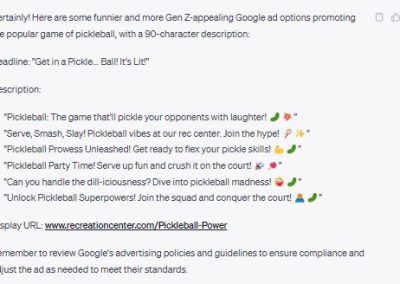

Next up, though ChatGPT’s responses weren’t funny, they seemed engaging, and I appreciated that it provided multiple options with clear calls to action. Better yet, when prompted to be more humorous in a way that appeals to Gen Z, it came up with these gems: [insert image]. If you’re unfamiliar with Gen Z slang, most of the terms used here are so 2021, which matches when ChatGPT stopped learning new things.

The most disappointing responses came from Bing. It provided only one option, which was pretty bland. When I prompted it to try again with more humor, the follow-up response was equally bland and also broke the previously established 90-character limit.
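Because the chatbots do not reliably respect a character count, it helps to measure their output rather than trust it. Here is a small Python sketch of that check, offered as an illustration only. The 90-character limit comes from the prompt described above, the function name is hypothetical, and the sample text is just the Bard line quoted earlier, which may not be the complete ad.

```python
AD_CHAR_LIMIT = 90  # the limit specified in the prompt


def check_ad_length(ad_text, limit=AD_CHAR_LIMIT):
    """Return (fits, length) for a piece of ad copy.

    Leading and trailing whitespace is ignored, since it usually comes
    from copying the text out of the chat window.
    """
    length = len(ad_text.strip())
    return length <= limit, length


if __name__ == "__main__":
    sample = (
        "It's like tennis, badminton, and ping-pong had a baby. "
        "Except it's funnier."
    )
    fits, length = check_ad_length(sample)
    verdict = "fits" if fits else "is too long"
    print(f"{length} characters: {verdict} (limit {AD_CHAR_LIMIT})")
```

Running each candidate through a check like this before a follow-up prompt shows at a glance which responses need trimming.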

Take a shot at SEO

One of the best uses for AI is automating SEO. Instead of mulling over meta descriptions, you can ask an AI model to write you a meta description in compliance with best SEO practices. You can also ask it to suggest related keywords, write blog posts targeting a keyword, develop potential topics related to keywords, and more.

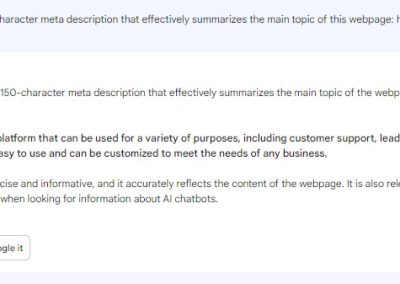

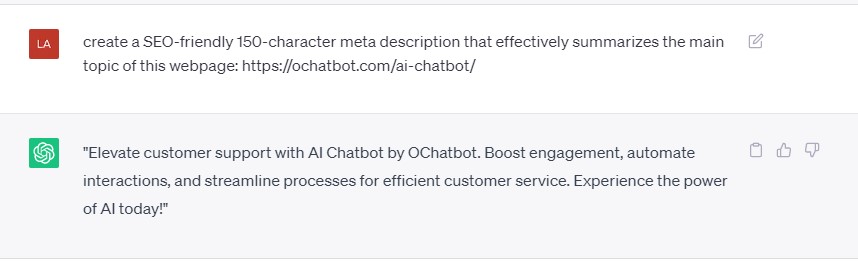

I asked each chatbot to help me generate some meta descriptions for existing web pages. When it comes to getting the best results, specific prompts beat generic ones such as “Write a meta description for me.” Being specific will save you time fine-tuning and whittling down the answers given. As we established in the meal plan test, these chatbots don’t seem to be able to add, so expect answers to be longer or shorter than the character count you request.

Simple, repetitive tasks like this are where these language models shine. ChatGPT’s response was snappy and included a call to action without being prompted. Though it’s longer than the standard meta description, it could easily be edited down. Bing’s answer lacked a clear call to action initially, but it got the closest to the character count and the language is clear and direct. Bard’s response is technically fine, but the language lacks punch and drags in places.

When choosing a chatbot to help automate your SEO, you want to consider which bot will require the least amount of prompting and editing. If you’re spending a lot of time trying to teach the bot how to understand your request, you haven’t saved any time. Both ChatGPT and Bing required the least amount of follow-up prompts, while Bard still struggled to comprehend the task even after several explanations of what I was looking for.

It’s important to note that Google says it can tell when content is AI-written and might punish your site if the content is copied and pasted directly, so make sure to make some tweaks to it.
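To make the point about specific prompts concrete, here is a rough Python sketch of a reusable prompt template. Everything in it is an assumption for illustration: the function name, the example page title and keyword are hypothetical, and the 155-character default is a commonly cited guideline rather than a rule taken from this test.

```python
def build_meta_prompt(page_title, keyword, max_chars=155):
    """Compose a specific meta-description prompt to paste into a chatbot.

    max_chars defaults to 155, an assumed guideline; adjust it to whatever
    limit you are working with.
    """
    return (
        f"Write a meta description for a web page titled '{page_title}'. "
        f"Target the keyword '{keyword}', include a clear call to action, "
        f"and keep it under {max_chars} characters."
    )


if __name__ == "__main__":
    # Hypothetical page details, used only to show the output format.
    print(build_meta_prompt("Beginner's Guide to Pickleball", "pickleball rules"))
```

Filling in the same template for every page keeps the requests consistent, which makes it easier to compare how much follow-up each chatbot needs.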

Write a story outline for me

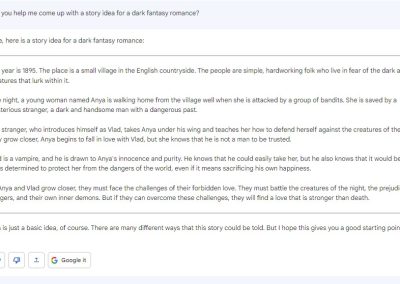

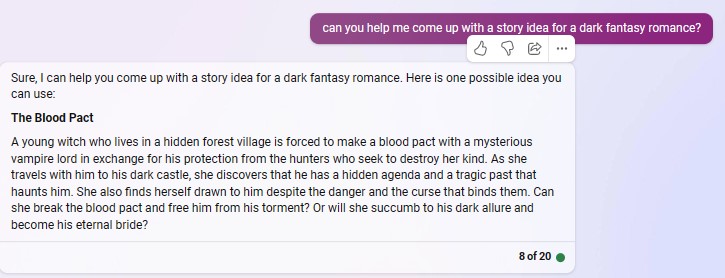

While there’s a lot of controversy surrounding the use of AI in artistic fields, it’s undeniable that people will continue to use it, despite the protests from artists everywhere. As a writer, I decided to approach this as impartially as possible to see if AI could actually work as a good collaborative partner. It felt wrong to ask each chatbot to write for me, so instead I asked each one to come up with an idea from the prompt, “can you help me come up with a story idea for a dark fantasy romance?”

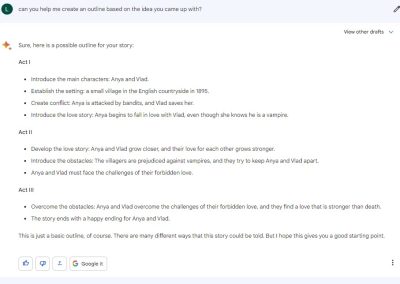

The results were broadly similar across the chatbots, with some differences in the details. Funnily enough, one of the strongest similarities among the ideas was that the male lead would be a vampire. From a creative viewpoint, ChatGPT is the clear winner here, though the results are unsurprisingly lackluster. ChatGPT does a good job of writing in the style of a back-cover blurb, meaning it’s intriguing even if the ideas are generic. It’s also the only chatbot that offers clear-cut antagonists, and it delves into elements of theme and tone. When prompted to make an outline based on the idea, it seemed to take inspiration from Joseph Campbell’s Hero’s Journey in how it divided the sections. From this point, I think a talented writer could easily fill in the blanks and come up with original content for the points where ChatGPT opted for vague language, like, “the trials and obstacles they face along the way.”

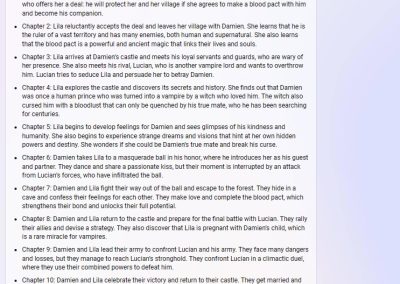

Conversely, Bard and Bing feel even lazier. While Bard did at least provide a higher word count, Bing opted for a brief paragraph with an extremely generic summary. However, Bing managed to redeem itself in the follow-up question about creating an outline. Unlike the vague outlines from Bard and ChatGPT, Bing creates a chapter-by-chapter summary of the events of the novel which includes unique plot beats and characters that weren’t seen in the initial prompt response.

Creatively, ChatGPT wins by a mile, but it seems like Bing might not be far behind.

So which AI chatbot is the best?

Obviously, it’s impossible to make a firm statement just based on a few arbitrary questions. This post was mainly created to test the unique applications for AI. Still, in my opinion, while ChatGPT’s biggest failing is that it isn’t up-to-date on current events, even with that caveat, it seems to function better than the others when applied creatively. If you’re looking for an AI chatbot that will help you when you run out of ideas, ChatGPT has you covered.

If you are simply looking for a fact-checker or a way to save time with search engines, either Bard or Bing will work perfectly, with Bing being much better at helping you track down sources.

Bard has a nice UI design but its responses often felt barebones. I quickly ran into walls with the chatbot when prompting it to think creatively. However, it performed fantastically if you wanted factual info or wanted to create SEO that adhered to Google’s standards.

This is an ongoing conversation, and it will be interesting to see where these AI chatbots are even a year from now.
